Linx Blog

The ShinyHunters Playbook: Why Identity Has Become The New Attack Surface

Another week, another ShinyHunters headline.

First Canvas. Then 7-Eleven and Charter Communications. Together, these three breaches compromised roughly 317.2 million identities in the last month alone. The details of these incidents are still emerging, and in moments like this it is important not to overstate what we know. But what is already clear is that the same attacker name keeps showing up in conversations about data theft, extortion, SaaS platforms, and trusted enterprise access.

That is the part worth paying attention to.

The security industry still talks about breaches as if attackers are always finding more sophisticated ways to break in. Sometimes they are. But increasingly, the story is different. Attackers are not always breaking through the front door. They are finding identities that already have keys.

What if the real story is not that attackers have become dramatically better at bypassing the enterprise?

What if the real story is that attackers have become exceptionally good at using the trust enterprises have already created?

That is what makes ShinyHunters such an important case study. Not because the group is unique in every tactic it uses, and not because every incident follows the exact same pattern. The group matters because its campaigns keep exposing the same uncomfortable truth: identity has become one of the most valuable attack surfaces in the modern enterprise.

The Pattern Behind the Headlines

The recent Canvas incident is still being investigated, but it has already shown the operational impact of compromising a widely used digital platform. Instructure, the company behind Canvas, said it reached an agreement with the attackers to have stolen data deleted, though the company did not disclose the terms or confirm whether a ransom was paid. The attackers had claimed access to data tied to millions of students, teachers, and staff, and the incident disrupted schools during one of the most sensitive windows of the academic year.

The 7-Eleven incident followed a different path. The company confirmed unauthorized access to systems used to store franchisee documents. Reports linked the incident to ShinyHunters and described exposed franchise applicant data, including sensitive personal information. Some reports said stolen files were published after 7-Eleven declined to pay.

Different organizations. Different environments. Different circumstances.

The economics look familiar.

Sensitive data is exposed. Operations are disrupted. Organizations are pressured to negotiate. Customers, students, employees, partners, or applicants are left wondering where their information went and what happens next.

This is why these attacks continue. They work. The criminal economy around data theft and extortion is not built on novelty. It is built on repeatability. Attackers do not need a new zero-day every week if they can find exposed credentials, overprivileged accounts, vulnerable integrations, weakly governed service accounts, or trusted access paths that lead to valuable data.

That is the pattern behind the headlines.

Why Snowflake Changed the Conversation

The most important ShinyHunters campaign was not the one that happened in the last month.

It was Snowflake.

When the Snowflake campaign became public, the initial instinct across the industry was to look for the platform flaw. That is how we have been trained to think about breaches. A major cloud platform is involved, large volumes of customer data are exposed, and the first question becomes: what vulnerability did the attacker exploit?

But the Snowflake story was more instructive than that. Mandiant said it found no evidence that unauthorized access stemmed from a breach of Snowflake’s enterprise environment. Instead, the incidents it investigated were traced back to compromised customer credentials. In many cases, those credentials had been previously stolen by infostealer malware. Some accounts reportedly lacked multi-factor authentication. Attackers then used those legitimate credentials to access customer Snowflake instances, enumerate data, and exfiltrate information.

That should have changed the industry conversation.

The attack did not begin with a vulnerability in the traditional sense. It began with an identity. The attackers did not need to defeat every control in the environment. They needed to find a trusted path that already existed.

From there, the playbook becomes familiar. Use stolen credentials. Access a cloud or SaaS environment. Determine what the identity can reach. Locate high-value data. Extract it. Extort the victim.

The most important lesson from Snowflake was not that attackers had become better at breaking in. It was that attackers had become better at using the trust we already created for them.

That distinction matters because it changes how defenders need to think. If the threat is only exploitation, then the solution is patching, hardening, and vulnerability management. Those things still matter. But if the threat is trusted access being misused, the problem becomes much broader. It becomes a question of identity governance, privilege, context, monitoring, and trust.

Snowflake became one of the clearest examples of a larger shift already underway. The enterprise attack surface is no longer defined only by networks, endpoints, and applications. It is increasingly defined by identities and the access paths those identities create.

What ShinyHunters Understands

Groups like ShinyHunters understand something many organizations are still struggling to operationalize: the fastest path to valuable data is often not through infrastructure. It is through identity.

This does not mean every ShinyHunters incident is purely an identity attack. Real-world breaches are messier than that. They involve social engineering, stolen credentials, exposed systems, third-party platforms, weak configurations, and gaps in monitoring. But across many of these campaigns, the theme is consistent. The attacker looks for a way to inherit trust.

That trust may come from a stolen employee credential. It may come from a compromised contractor account. It may come from an account without MFA. It may come from a service account that has accumulated far more access than anyone realizes. It may come from a SaaS integration connected to sensitive data. It may come from an identity provider relationship, an API token, or an admin workflow that was designed for speed and convenience rather than adversarial use.

Attackers do not care whether an identity belongs to a human, a workload, a third-party integration, or an AI agent. They care whether that identity can take them somewhere valuable.

That is the shift.

For a long time, identity was treated primarily as a control for access. Authenticate the user. Grant the right permission. Remove access when someone leaves. Review access periodically for compliance. That model made sense when the enterprise was simpler, applications were fewer, and most access was tied to human employees inside relatively well-defined boundaries.

That world no longer exists.

The Identity Explosion Nobody Is Talking About

The average enterprise has lost track of how many identities it actually has.

Not users. Identities.

There was a time when identity mostly meant employees. Then contractors became a meaningful part of the workforce. Then partners and vendors were brought into internal systems. Then SaaS applications exploded. Then cloud infrastructure created workloads, roles, and service accounts at a scale most governance programs were not designed to handle. Then APIs and third-party integrations connected systems that were never originally designed to work together. Now AI agents are entering the environment, taking actions on behalf of users, systems, and business processes.

Every one of those changes created new identities. Every new identity created permissions. Every permission created trust. Every trust relationship created a possible attack path.

This is the part many organizations cannot see clearly. They may know how many employees they have. They may know how many applications they manage. They may have a list of privileged users in one system or a quarterly access review process in another. But far fewer can answer the more important question: how many identities exist across the entire environment, what can they reach, and how do they connect to one another?

That is where the risk accumulates.

A service account gets created for a project and never removed. A contractor keeps access after the engagement ends. A SaaS integration is granted broad permissions because it was faster than scoping them properly. A cloud role inherits access from another role. A machine identity is exempted from the controls applied to human users. An AI agent is connected to systems before the governance model is fully understood.

None of these decisions may look catastrophic in isolation. Most of them happen for reasonable business reasons. Teams are moving quickly. Applications need to connect. Data needs to flow. Employees need to work. Automation needs to run.

But attackers do not experience the environment as isolated decisions. They experience it as a graph of trust.

That is what makes identity-based attacks so effective. The attacker does not need to understand the company the way an org chart describes it. They only need to understand the paths between identities, systems, and data. If one identity gives them access to another system, and that system gives them access to another dataset, the business logic behind those connections is irrelevant. The path exists.

And if the path exists, an attacker can use it.
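The "graph of trust" idea can be made concrete with a small path search. The following is a minimal sketch, not a description of any product: the graph, the node names, and the `find_path` helper are all invented for illustration, and an edge simply means "this identity or system can access or assume the next one."

```python
from collections import deque

# Hypothetical trust graph: an edge A -> B means "A can access or assume B".
# Every node name here is invented for illustration.
TRUST_GRAPH = {
    "contractor:jdoe": ["app:ticketing"],
    "app:ticketing": ["svc:report-runner"],
    "svc:report-runner": ["role:warehouse-reader"],
    "role:warehouse-reader": ["data:customer-table"],
    "user:intern": ["app:wiki"],
}

def find_path(graph, start, target):
    """Breadth-first search: return the shortest trust path, or None."""
    queue = deque([[start]])
    seen = {start}
    while queue:
        path = queue.popleft()
        node = path[-1]
        if node == target:
            return path
        for neighbor in graph.get(node, []):
            if neighbor not in seen:
                seen.add(neighbor)
                queue.append(path + [neighbor])
    return None

path = find_path(TRUST_GRAPH, "contractor:jdoe", "data:customer-table")
print(" -> ".join(path) if path else "no path")
```

The search never consults an org chart or a business justification. It only follows edges, which is the point the paragraph above makes about how an attacker sees the environment.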

What Security Leaders Are Realizing

This is why many security leaders are starting to ask a different set of questions than they were five years ago. The old questions still matter: who has access, who approved it, and when was it last reviewed? But they are no longer enough.

The better questions are more contextual. Which identities are overprivileged relative to what they actually do? Which service accounts have accumulated access no human would ever be allowed to keep? Which machine identities can reach sensitive systems? Which third-party integrations have permissions that no one has reviewed in months? Which AI agents are beginning to act with privileges inherited from the humans or systems that created them? Which trusted relationships would become dangerous if a single credential were compromised?

Those are not just compliance questions. They are security questions.

They are also difficult questions to answer with legacy identity tooling. Traditional IAM and IGA systems were built around granting access, removing access, and proving to auditors that access was reviewed. That work remains important, but it was not designed for the speed, complexity, and adversarial pressure of the current environment.

The question is no longer only whether an identity is authorized. The question is what becomes possible once that identity is trusted.

That is exactly what the ShinyHunters playbook keeps demonstrating. The breach begins with access. The damage comes from what that access can reach.

What Organizations Need To Do Differently

The lesson from ShinyHunters is not that organizations need more security tools. It is that they need a better understanding of trust.

The challenge with identity-based attacks is that the attacker rarely starts with their final objective. They start with a foothold. A credential. A service account. A contractor account. A SaaS integration. An API token. Then they follow the trust relationships that already exist inside the environment.

That means organizations need to focus on four things:

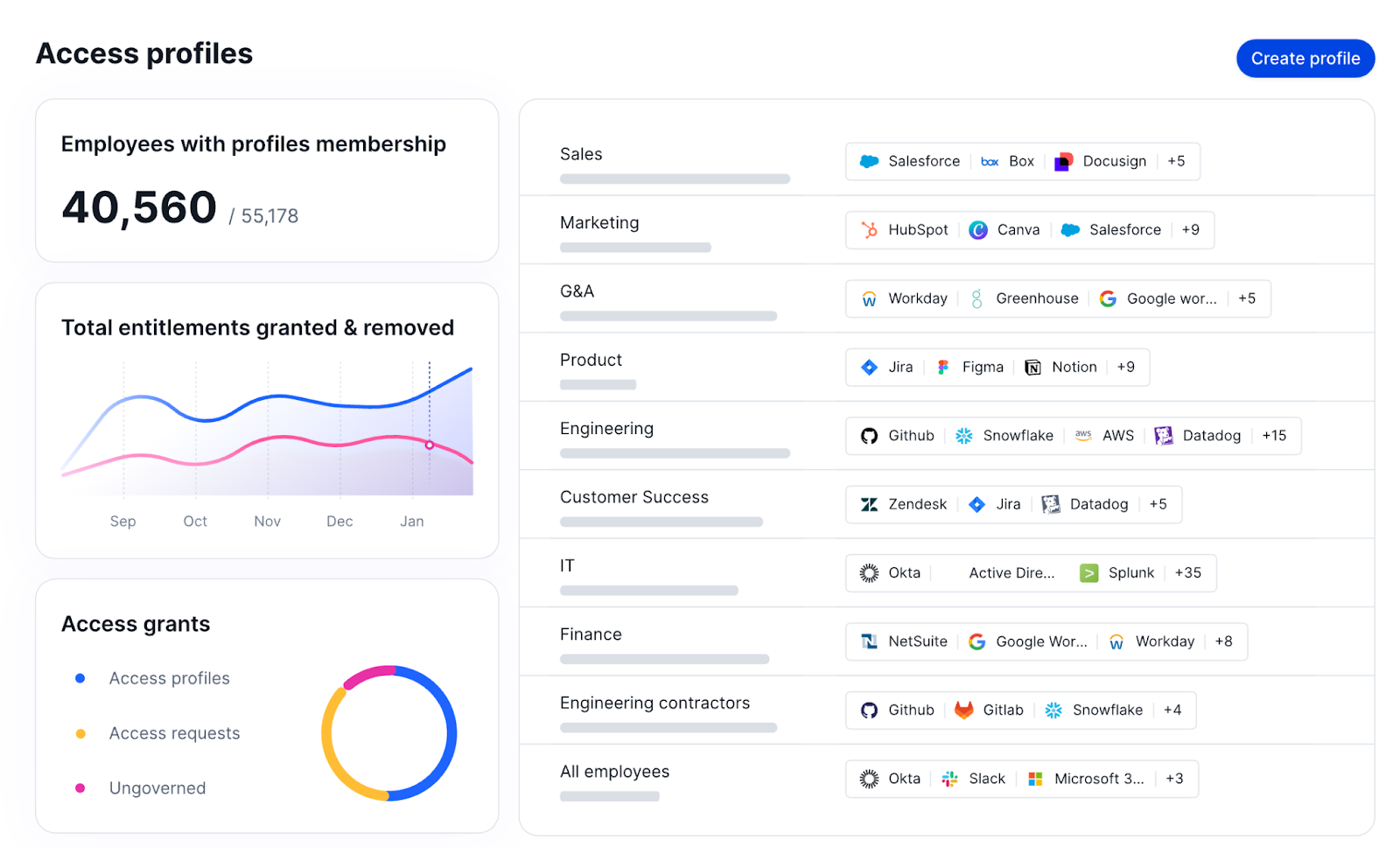

- Inventory every identity. Human identities are only part of the attack surface. Service accounts, machine identities, third-party integrations, cloud workloads, and AI agents all create access paths that a modern IGA solution needs to cover.

- Continuously evaluate access. Knowing who has access is no longer enough. Organizations need to understand whether access is still needed, whether it is being used, and whether the level of privilege matches the risk.

- Understand attack paths. Attackers do not think in accounts. They think in pathways. An identity that appears low risk on its own can become high risk when combined with the systems, applications, and data it can reach.

- Treat identity governance as continuous. Modern environments change too quickly for periodic reviews to be the primary control. Trust relationships need to be evaluated continuously as identities, permissions, and systems evolve.
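As one illustration of "continuously evaluate access," the check below compares what an identity has been granted with what it has actually used. It is a sketch under stated assumptions: the permission names, the 90-day idle window, and the `unused_permissions` helper are hypothetical, and real usage data would come from whatever logs your systems expose.

```python
from datetime import date, timedelta

def unused_permissions(granted, last_used, today, max_idle_days=90):
    """Return granted permissions that were never used or have been idle too long."""
    cutoff = today - timedelta(days=max_idle_days)
    stale = []
    for perm in sorted(granted):
        used_on = last_used.get(perm)  # None means no recorded use at all
        if used_on is None or used_on < cutoff:
            stale.append(perm)
    return stale

# Hypothetical identity: three grants, only one used recently.
granted = {"db:read", "db:write", "storage:admin"}
last_used = {"db:read": date(2025, 5, 20), "db:write": date(2024, 11, 2)}

print(unused_permissions(granted, last_used, today=date(2025, 6, 1)))
```

Run on a schedule rather than once a quarter, a check like this turns "is the access still needed?" into a question with a current answer.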

The goal is not to eliminate trust. The goal is to understand it well enough that attackers cannot exploit it first.

Why We Started Linx

This shift is one of the reasons we started Linx. Not because another attacker group made the news, and not because fear is a compelling business strategy. We started Linx because we saw the enterprise changing faster than the identity tools designed to secure it.

The challenge facing security teams today is not simply managing identities. It is understanding trust across an environment that has become dramatically more complex over the last decade. Human identities are only one part of the equation. Organizations now need to govern machine identities, service accounts, cloud workloads, SaaS applications, APIs, third-party integrations, contractors, vendors, and AI agents. Every one of those identities creates access. Every access decision creates a relationship. Every relationship changes the shape of the attack surface.

Legacy identity governance was built for a world where periodic review was enough. Modern identity security requires continuous understanding. It requires knowing not only who has access, but why they have it, whether they still need it, what risk it creates, and what an attacker could do with it.

That is the problem Linx is focused on solving.

The lesson from ShinyHunters is not that attackers are becoming unstoppable. It is that attackers are following the architecture we have built. And the architecture we have built increasingly runs on identity.

What Comes Next

I think the next decade of security will be defined by a simple idea: organizations will need to continuously validate trust across their environments, not just authenticate it once.

Historically, identity programs have focused on granting access, removing access, and periodically reviewing access. That model was built for a world where identities were relatively static and environments changed slowly. Today’s environments are dynamic. Permissions change constantly. New identities are created every day. Machine identities, AI agents, and automated workloads are introducing entirely new categories of access that most organizations are still learning how to govern.

The companies that succeed will not necessarily be the ones that react fastest to the next breach. They will be the ones that understand their trust relationships deeply enough to identify and eliminate attack paths before attackers can exploit them.

That is the future we are building toward at Linx.

And if the last few years of ShinyHunters headlines have taught us anything, it is that the industry is moving there whether we are ready or not.

At Linx Security, we help organizations build robust identity security that addresses each stage of the attack chain. Book a demo with one of our engineers to learn more about how we can keep your systems safe from identity breaches.

The Complete UAR Checklist: How to Automate Access Certifications and Strengthen Identity Security

If your organization runs user access reviews, you already know the pain. Spreadsheets, manual exports, managers rubber-stamping hundreds of permissions in a single afternoon, audit evidence scattered across email threads. The process is supposed to enforce least privilege and satisfy auditors, but in most organizations it does neither well.

This post covers what an effective access review program actually looks like in practice, how to automate it, and how to fix the most common failure modes. If you came here for a checklist, you can download the complete user access review checklist here.

What Is a User Access Review?

A user access review (UAR) — also called an access certification, entitlement review, or certification campaign — is the process of periodically validating who has access to which systems and data, and whether those permissions are still appropriate. Organizations run them to enforce least privilege, meet compliance requirements, and reduce the risk of unauthorized access.

Common Mistakes in User Access Review Programs

Most UAR programs fail for the same handful of reasons:

- Identity fragmentation. Dozens of SaaS apps, multiple clouds, and on-prem AD, but no single source of truth tying them together. Answering "who has access to what" takes days, not minutes.

- Manual data collection. Exporting user lists, reformatting them, and chasing managers via email is slow, error-prone, and a poor use of security team resources.

- Reviewer fatigue. Managers handed spreadsheets of hundreds of permissions with no context will rubber-stamp them. Every time.

- Delayed remediation. Even when risky permissions are correctly flagged, removing those permissions requires coordination across teams and manual ticketing. Identified risk stays open for weeks.

- Poor documentation. Auditors want to see specific decisions and rationales, not "the review was completed." Email threads and ad-hoc spreadsheets don't hold up.

User Access Review Checklist

What Your UAR Process Should Cover

A complete UAR program follows six stages. If any of these are missing or poorly executed, that's where risk accumulates and where auditors find gaps.

Planning & Scoping. Define which systems, applications, and data repositories are in scope before anything else. Prioritize by sensitivity, with financial systems, customer data, and privileged infrastructure first. Confirm which compliance frameworks apply and what they require.

Data Collection. Pull current access lists from all in-scope systems to get a clean, consolidated snapshot of who has access to what, at what permission level. This means normalizing disparate permission formats, flagging orphaned accounts and terminated users, and attaching context (last activity, business role, usage patterns) to every record before review begins.

Reviewer Assignment. Assign the right reviewer to every access record based on system ownership, management hierarchy, or data sensitivity. Ensure no record goes unassigned, set clear deadlines, and brief reviewers on what is expected: explicit Approve/Deny decisions, not passive acknowledgment.

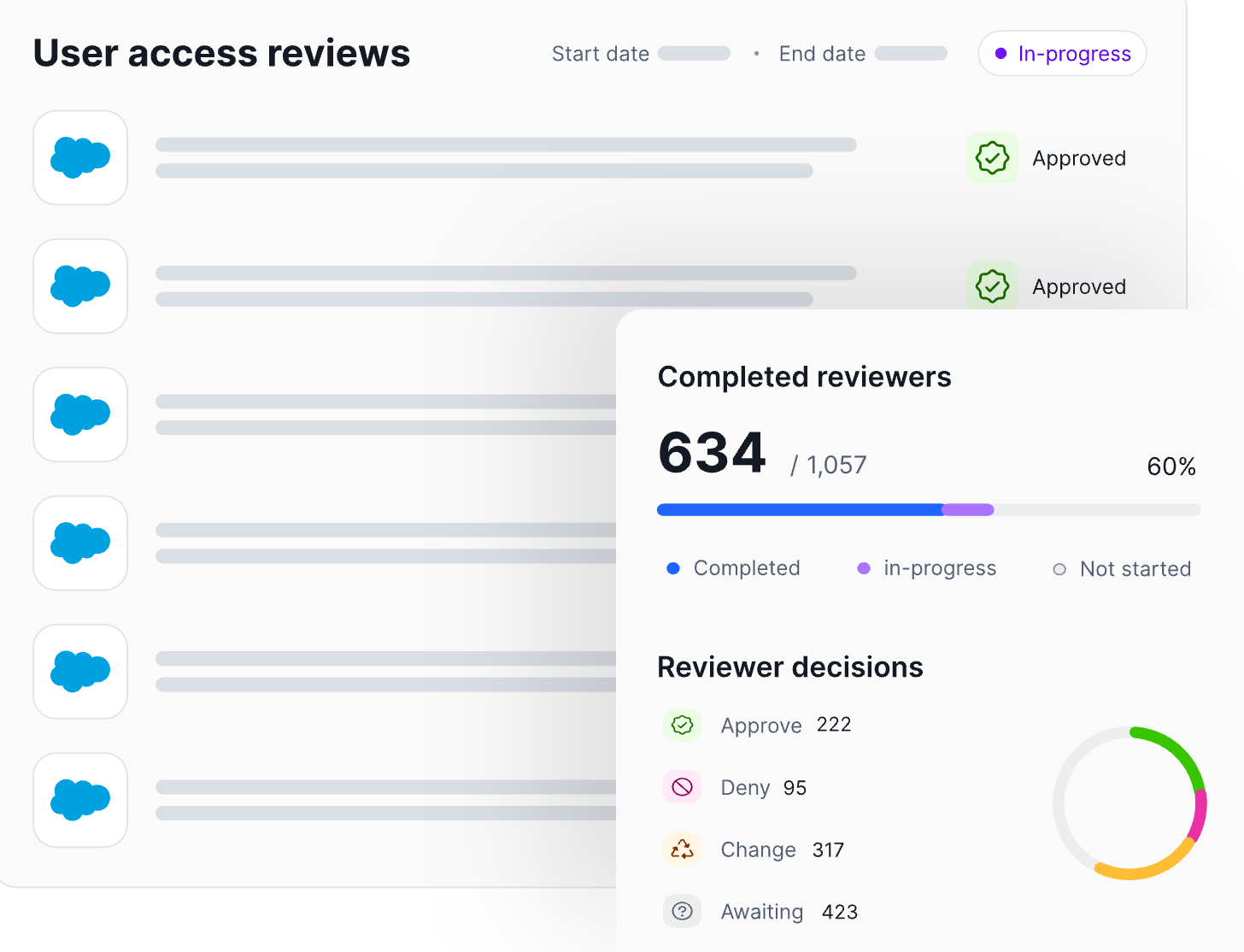

Access Certification & Remediation. Reviewers approve or revoke access for every record. Aim for a 100% review rate, because falling short often means restarting the process. Ways to streamline this stage include flagging the highest-risk permissions first, providing context-rich recommendations to prevent rubber-stamping, and sending reminders to keep the process on schedule.

Documentation & Evidence Collection. Capture a full audit trail: who reviewed what, when decisions were made, and what was revoked. Every decision needs a timestamp, a reviewer identity, and a rationale, stored in an immutable format that an auditor can reconstruct on demand.

Reporting & Continuous Improvement. Formally close the cycle with a summary report covering scope, completion rate, access modified or revoked, and remediation times. Use the findings to identify bottlenecks, address rubber-stamping patterns, and refine the next cycle toward a more automated, continuous review model.
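The Data Collection stage above (normalizing disparate permission formats, then flagging orphaned accounts and terminated users) might look roughly like this. Everything specific here is an assumption made for illustration: the two export shapes, the system names `saas_a` and `cloud_b`, and the HR status lookup.

```python
def normalize(system, row):
    """Return a list of {identity, system, permission} dicts for one export row."""
    if system == "saas_a":    # hypothetical shape: {"email": ..., "role": ...}
        return [{"identity": row["email"].lower(), "system": system,
                 "permission": row["role"].lower()}]
    if system == "cloud_b":   # hypothetical shape: {"principal": ..., "policies": [...]}
        return [{"identity": row["principal"].lower(), "system": system,
                 "permission": p.lower()} for p in row["policies"]]
    raise ValueError(f"unknown system: {system}")

def flag(record, hr_status):
    """Attach a review flag using a hypothetical HR status lookup."""
    status = hr_status.get(record["identity"])
    if status is None:
        flag_value = "orphaned"      # no matching owner in the HR data
    elif status == "terminated":
        flag_value = "terminated"
    else:
        flag_value = None
    return {**record, "flag": flag_value}

exports = [
    ("saas_a", {"email": "Ana@example.com", "role": "Admin"}),
    ("cloud_b", {"principal": "bob@example.com",
                 "policies": ["ReadOnly", "BillingAdmin"]}),
    ("saas_a", {"email": "old-svc@example.com", "role": "Editor"}),
]
hr_status = {"ana@example.com": "active", "bob@example.com": "terminated"}

snapshot = [flag(rec, hr_status)
            for system, row in exports
            for rec in normalize(system, row)]
for rec in snapshot:
    print(rec)
```

In practice a service account would be matched against an owner registry rather than HR data. The sketch uses a single lookup only to keep the flagging logic visible.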

Download the complete UAR Checklist →

Ways to Automate Access Reviews

Automation is the highest-leverage improvement available to most UAR programs. Here's what it looks like at each stage:

Continuous data collection. Automated platforms aggregate identity and permission data across all connected environments in real time, eliminating manual exports and ensuring reviewers always see current data. This includes automatically surfacing known bad access — orphaned accounts, dormant privileges, and accounts belonging to terminated users — as well as unknown bad access, such as permissions that deviate significantly from peer baselines and wouldn't be caught without behavioral analysis.

AI-driven risk prioritization. Machine learning ranks entitlements by risk, surfacing the permissions most likely to represent a genuine threat. Reviewers focus on what matters instead of weighing every record equally, which addresses the rubber-stamping problem and speeds up reviews.

Context-enriched reviewer workflows. Reviewers receive pre-enriched access lists with last activity, business role, and AI-generated recommendations, replacing hours of cross-referencing with immediate, actionable insight.

API-driven remediation. When access is denied, revocation triggers automatically via API. No tickets, no lag, no waiting for IT to action the change.

Continuous compliance evidence. Every decision is logged, timestamped, and rationale-tagged in an immutable audit trail. Audit readiness becomes a permanent state rather than a quarterly fire drill.
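One simple way to approximate "permissions that deviate significantly from peer baselines" is to measure how common each entitlement is within a peer group. The sketch below uses a plain frequency threshold, not machine learning, and the peer group, the entitlement names, and the 20% threshold are all assumptions chosen for illustration.

```python
def rare_entitlements(access, peer_groups, threshold=0.2):
    """Flag entitlements held by less than `threshold` of an identity's peer group."""
    flagged = []
    for group, members in peer_groups.items():
        for member in members:
            for ent in sorted(access.get(member, set())):
                holders = sum(1 for m in members if ent in access.get(m, set()))
                share = holders / len(members)
                if share < threshold:
                    flagged.append((member, ent, round(share, 2)))
    return flagged

# Hypothetical sales team: only one member holds a payroll entitlement.
access = {
    "ana": {"crm:read", "crm:write"},
    "bob": {"crm:read", "crm:write"},
    "cat": {"crm:read", "crm:write"},
    "dan": {"crm:read", "crm:write"},
    "eve": {"crm:read", "crm:write"},
    "fay": {"crm:read", "payroll:admin"},
}
peer_groups = {"sales": ["ana", "bob", "cat", "dan", "eve", "fay"]}

print(rare_entitlements(access, peer_groups))
```

A production system would weigh more signals (usage, sensitivity, role history), but even this crude baseline surfaces the one grant a reviewer should look at first.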

How to Stop Rubber-Stamping in Access Reviews

Rubber-stamping happens when reviewers are overwhelmed by volume and lack the context to make real decisions, so they approve everything. It is the single biggest threat to UAR program validity, and it cannot be solved by reminding managers to "be more thorough."

The fix is structural:

- Reduce volume by filtering. Show reviewers only the permissions that genuinely warrant scrutiny — high-risk, anomalous, or out-of-pattern entitlements. Hide the rest.

- Enrich every decision with context. Last activity, business role, peer comparison, and a clear recommendation. A reviewer with context makes decisions in seconds; without it, they default to approval.

- Track reviewer behavior. If a manager approves 100% of records in five minutes, that is data worth surfacing.

- Eliminate friction. A clean interface with structured Approve/Deny captures decisions faster than a spreadsheet and produces better audit evidence.
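Tracking reviewer behavior can start with a very small check. The sketch below flags reviewers who approved every record at a pace too fast for real scrutiny. The field names, the 50-record minimum, and the five-seconds-per-record threshold are illustrative assumptions, and `decided_at` is taken to be a timestamp in seconds.

```python
def suspicious_reviewers(decisions, min_records=50, max_seconds_per_record=5.0):
    """Return reviewers who approved everything at an implausibly fast pace."""
    by_reviewer = {}
    for d in decisions:
        by_reviewer.setdefault(d["reviewer"], []).append(d)

    flagged = []
    for reviewer, items in sorted(by_reviewer.items()):
        if len(items) < min_records:
            continue  # too few decisions to judge
        all_approved = all(d["decision"] == "approve" for d in items)
        times = [d["decided_at"] for d in items]
        pace = (max(times) - min(times)) / len(items)  # seconds per record
        if all_approved and pace <= max_seconds_per_record:
            flagged.append(reviewer)
    return flagged

# Hypothetical campaign: one manager approves 100 records in about five minutes,
# another takes over an hour and denies some.
decisions = (
    [{"reviewer": "mgr_fast", "decision": "approve", "decided_at": i * 3}
     for i in range(100)]
    + [{"reviewer": "mgr_careful",
        "decision": "deny" if i % 10 == 0 else "approve",
        "decided_at": i * 40}
       for i in range(100)]
)

print(suspicious_reviewers(decisions))
```

The output is a prompt for a conversation or a re-review, not proof of negligence. Some access sets really are uniformly fine.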

How to Create an Audit Trail for Access Certifications

An audit trail isn't a log of reviews completed — it's a record of every decision, with full context, that an auditor can reconstruct months or years later.

A defensible audit trail requires:

- Per-decision logging. Every Approve or Deny captured with a timestamp, reviewer identity, and rationale.

- Immutable storage. Decisions can't be retroactively edited or lost in an email thread.

- Full chain reconstruction. For any access change, you can show who requested it, who approved it, when it was actioned, and what risk score it carried at the time.

- Continuous, not retrospective. The audit trail is generated automatically as decisions are made, not assembled the week before an audit.

Manual processes can technically produce all of this. They almost never do.
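To show what per-decision logging with tamper evidence can look like, here is a minimal hash-chained log. It is a sketch under assumptions, not a full answer to "immutable storage": the field names and helper functions are hypothetical, and a real deployment would also need write-once storage and access controls around the log itself.

```python
import hashlib
import json

GENESIS = "0" * 64  # placeholder hash for the first entry

def _digest(body):
    return hashlib.sha256(json.dumps(body, sort_keys=True).encode()).hexdigest()

def append_decision(log, reviewer, record_id, decision, rationale, timestamp):
    """Append one decision, chaining it to the previous entry's hash."""
    entry = {
        "reviewer": reviewer,
        "record_id": record_id,
        "decision": decision,
        "rationale": rationale,
        "timestamp": timestamp,
        "prev_hash": log[-1]["hash"] if log else GENESIS,
    }
    entry["hash"] = _digest(entry)  # computed before the "hash" key exists
    log.append(entry)
    return entry

def verify(log):
    """Return True only if no entry was edited, removed, or reordered."""
    prev_hash = GENESIS
    for entry in log:
        body = {k: v for k, v in entry.items() if k != "hash"}
        if entry["prev_hash"] != prev_hash or entry["hash"] != _digest(body):
            return False
        prev_hash = entry["hash"]
    return True

log = []
append_decision(log, "mgr_a", "rec-1", "deny", "no activity in 180 days", "2025-06-01T09:00:00Z")
append_decision(log, "mgr_a", "rec-2", "approve", "required for on-call role", "2025-06-01T09:02:00Z")
print(verify(log))
```

Because each entry commits to the one before it, a retroactive edit to any decision breaks verification for the rest of the chain.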

Get the Complete Access Review Checklist

The best practices above point you in the right direction. The checklist gives you the full roadmap — every step of the UAR lifecycle, what good looks like, and how to automate it.

User Access Review Best Practices

The highest-leverage improvements to any UAR program:

- Start with visibility. You cannot review what you cannot see. Unified, continuously updated identity data is the prerequisite for everything else.

- Enrich before you review. Context-rich, risk-ranked lists produce real decisions. Raw permission lists produce rubber-stamping.

- Automate identity risk remediation, not just collection. The security benefit is only realized when identified risk is closed automatically.

- Treat documentation as a byproduct. The audit trail should generate itself as decisions are made.

- Measure what matters. Detection-to-remediation time, approval rate, and percentage of rights modified — not just completion rate.
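The metrics in the last bullet can be computed from the same decision records a review produces. This sketch assumes a hypothetical record shape (`decision`, `decided_at`, `remediated_at`) and measures remediation time from the deny decision to the moment access was removed. Your own definition of "detection" may start the clock earlier.

```python
from datetime import datetime

def uar_metrics(records):
    """Summarize one review cycle from per-record decision data."""
    decided = [r for r in records if r["decision"] in ("approve", "deny")]
    denied = [r for r in decided if r["decision"] == "deny"]
    remediated = [r for r in denied if r.get("remediated_at")]
    hours = [(r["remediated_at"] - r["decided_at"]).total_seconds() / 3600
             for r in remediated]
    return {
        "completion_rate": len(decided) / len(records) if records else 0.0,
        "approval_rate": (len(decided) - len(denied)) / len(decided) if decided else 0.0,
        "revoked_share": len(denied) / len(decided) if decided else 0.0,
        "mean_hours_to_remediate": sum(hours) / len(hours) if hours else None,
    }

# Hypothetical cycle: two approvals, one denial remediated six hours later,
# and one record still pending.
records = [
    {"decision": "approve"},
    {"decision": "approve"},
    {"decision": "deny",
     "decided_at": datetime(2025, 6, 1, 9, 0),
     "remediated_at": datetime(2025, 6, 1, 15, 0)},
    {"decision": None},
]

print(uar_metrics(records))
```

An approval rate near 100% combined with a high completion rate is the pattern worth questioning, since it is what rubber-stamping looks like in aggregate.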

Automate Your Reviews with Linx Security

Linx Security is a modern identity governance platform purpose-built to make user access reviews continuous, intelligent, and measurable. With a graph-powered view of every human and non-human identity in your environment, and AI-driven workflows to act on what it surfaces, Linx handles the full UAR lifecycle in one platform.

What takes most teams weeks takes Linx days.

See Linx in action: book a demo at linx.security/demo

Frequently Asked Questions

What is the difference between a user access review and an access certification?

An access certification is the formal, compliance-driven step of certifying access, often tied to frameworks like SOC 2 or SOX, while "user access review" is a broader term covering the full lifecycle. In practice the terms are often used interchangeably. Other industry terms for the same general practice include entitlement reviews, certification campaigns, and permission audits. The goal is the same regardless of terminology: ensure permissions are appropriate, minimal, and current.

How do you automate user access reviews?

By using an identity governance platform that continuously aggregates permission data, enriches it with risk scores and context, delivers structured workflows to reviewers, and triggers automatic remediation on denial. Automation removes the manual exports, ticketing, and chasing that slow traditional reviews down.

How often should UARs be performed?

The required cadence depends on the framework and on auditor expectations. A common practice is quarterly reviews for sensitive systems and annual reviews for lower-risk apps. Mature programs are shifting to continuous monitoring, where anomalies trigger reviews in real time rather than on a fixed cadence.

What are the most common UAR mistakes?

Reviewing without context, relying on manual data collection, failing to automate remediation, and treating documentation as a separate task rather than a byproduct of the review process.

What is the principle of least privilege (PoLP) and how does it relate to access reviews?

Least privilege means every user has access only to what they need and nothing more. Access reviews are the primary mechanism for identifying and revoking the excess permissions that accumulate over time as roles change.

What compliance frameworks require UARs?

SOC 2, ISO 27001, HIPAA, SOX, and PCI DSS all require some form of periodic access review or certification. Demonstrating that permissions are actively managed and promptly remediated is a baseline expectation across nearly every major framework.

What is reviewer fatigue and how do you prevent it?

Reviewer fatigue is when managers are so overwhelmed by review volume that they approve everything without scrutiny. The fix is filtering to high-risk records only, enriching each one with context and recommendations, and removing friction from the decision interface.

What is just-in-time access and how does it improve UARs?

Just-in-time (JIT) access grants permissions only for the duration of a specific task, then automatically revokes them. It prevents standing privileges from accumulating in the first place, shrinking both the attack surface and the review workload.
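The core mechanic of JIT access is a grant that carries its own expiry. The following is a minimal in-memory sketch, with a hypothetical `JitGrants` class and invented identity and permission names. A real system would also revoke the permission in the target application when the grant lapses.

```python
from datetime import datetime, timedelta

class JitGrants:
    """Track time-bound grants keyed by (identity, permission)."""

    def __init__(self):
        self._grants = {}  # (identity, permission) -> expiry datetime

    def grant(self, identity, permission, now, minutes):
        self._grants[(identity, permission)] = now + timedelta(minutes=minutes)

    def is_allowed(self, identity, permission, now):
        expiry = self._grants.get((identity, permission))
        return expiry is not None and now < expiry

    def sweep(self, now):
        """Remove expired grants and return them for logging or revocation."""
        expired = [key for key, expiry in self._grants.items() if expiry <= now]
        for key in expired:
            del self._grants[key]
        return expired

start = datetime(2025, 6, 1, 12, 0)
jit = JitGrants()
jit.grant("ana", "db:admin", now=start, minutes=30)

print(jit.is_allowed("ana", "db:admin", now=start + timedelta(minutes=10)))
print(jit.is_allowed("ana", "db:admin", now=start + timedelta(minutes=30)))
```

Because nothing persists past its expiry, there is no standing privilege left over for the next review cycle to find.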

How do you measure the effectiveness of a UAR program?

Completion rate is the most-tracked metric but the least meaningful. Better indicators: detection-to-remediation time, reviewer approval rate, and percentage of access modified or revoked per cycle.

The State of IGA in 2026

If you’re evaluating identity governance and administration (IGA) solutions in 2026, you already know that the average enterprise has more non-human identities than human ones. At the same time, identity-related breaches continue to rise, and the traditional process of manually reviewing access or defining static roles is simply too slow. A strong IGA platform should handle all of these challenges out of the box.

In this article, we’ll explore the top 10 IGA tools to consider, organized by category, so you can quickly identify what type of platform suits your organization.

IGA Tools Comparison

Modern IGA Platforms

Modern IGA solutions automate the full identity lifecycle and continuously enforce the principle of least privilege across human and non-human identities.

1. Linx Security

At a Glance

Founded: 2023

Headquarters: New York, New York

Category: AI-native IGA & Identity Security

Deployment model: SaaS (cloud-native)

Customer rating: 5/5 on Gartner Peer Insights

Linx - Best for AI-Driven Identity Security and Governance

Linx is best for organizations that want a modern, AI-native IGA solution with fast deployment, real-time governance, and strong automation across both human and non-human identities.

Description and Features

Linx is an AI-native platform that combines deep identity visibility, automated governance, and continuous security enforcement into a single product. At its core is the Linx Identity Graph, which normalizes and correlates data across human, non-human, and agentic identities, mapping the full access path from identity to resource.

The Linx Identity Graph empowers you to make informed decisions: You understand, at a glance, who had access, how they gained it, whether they used it, and the blast radius in the case of a compromise. With a single click, you can remediate the root cause of an issue straight from the Identity Graph.

Linx gives you this full visibility into your identity environment by pulling data from every application and system you use. This full coverage is thanks to an extensive library of out-of-the-box connectors, which also include legacy and on-premises systems that many competitors overlook.

Linx also offers automated access review and remediation workflows that continuously evaluate entitlements and detect drift. When access needs to be adjusted or even revoked, remediation happens directly inside the platform: You don’t need any ticketing loops or manual intervention.

Additionally, Linx has introduced Autopilot, the first AI agent built for identity security and governance. Unlike AI systems that only operate on demand, Autopilot monitors identity environments 24/7, detects changes in real time, evaluates risk in context, and takes action to remediate issues. With Autopilot, you get always-on, autonomous coverage that eliminates the manual work of chasing access reviews, freeing up security teams to focus on implementing new features rather than firefighting.

The bottom line? With Linx, you get a single solution that covers visibility, governance, lifecycle automation, and identity security without the complexity and cost of legacy IGA vendors.

Pros

- Combines IGA and identity security posture management (ISPM) in one platform.

- AI-native architecture built from the ground up — not a legacy platform with AI bolted on.

- Well-suited for the modern era with strong support for non-human and agentic identities, quick deployment, and industry-leading time-to-value.

- Identity Graph provides unified, real-time visibility across human, non-human, and AI agent identities in a single view.

- Autopilot performs autonomous remediation, not just recommendations. It detects, evaluates, and acts without requiring human intervention.

- In-platform remediation eliminates ticketing loops and manual handoffs.

- Clean, non-technical UI makes the platform accessible to GRC and security personas without developer involvement. No query language needed.

Cons

- Smaller connector library and SI partner ecosystem than legacy IGA leaders.

- On-premises application support is more limited than platforms like SailPoint.

- Fewer community resources, public documentation, and third-party implementation partners.

- As a newer company, Linx has been recognized by Forrester but does not yet have the breadth of analyst recognition that longer-established vendors on this list have.

2. Veza

At a Glance

Founded: 2020

Headquarters: Los Gatos, California

Category: Identity Security

Deployment model: SaaS (cloud-native)

Customer rating: 4.8/5 on Gartner Peer Insights

Veza - Best for Permissions-level Access Visibility

Veza is best for security teams that need deep visibility into permissions and authorization across cloud and data systems, especially across complex data environments like Snowflake, AWS, and custom applications.

Description and Features

Veza's Access Graph maps an organization’s entire identity ecosystem, with a deep focus on data and infrastructure. This approach makes Veza strong for access visibility and least-privilege enforcement.

Recently, Veza has introduced Access Agents, which are AI agents designed for governance tasks. Veza has also invested in AI agent security to provide visibility into MCP servers, AI agent permissions, and LLM infrastructure.

Pros

- Access Graph delivers the most granular permissions visibility in this category — mapping down to specific data objects, tables, and resources, not just users and groups.

- Exceptionally strong for data system governance across Snowflake, databases, and cloud infrastructure.

- 300+ integrations covering cloud, SaaS, and custom environments.

- AI Agent Security product provides visibility into MCP servers, AI agent permissions, and LLM infrastructure.

- Recognized in the 2025 Gartner Market Guide for Identity Governance and Administration.

Cons

- Acquired by ServiceNow in December 2025, meaning product roadmap, pricing, and support structure are subject to change.

- Veza has no true in-platform remediation, meaning it can surface risk but cannot execute remediation without leaving the platform.

- Traditional IGA workflows — access requests, lifecycle management, provisioning automation — are recent additions, not core strengths.

- Less mature for end-to-end IGA compared to vendors built around the full lifecycle from day one.

Note: ServiceNow acquired Veza in December 2025.

3. Lumos

At a Glance

Founded: 2020

Headquarters: San Francisco, California

Category: SaaS Management IGA

Deployment model: SaaS (cloud-native)

Customer rating: 4.6/5 on Gartner Peer Insights

Lumos - Best for SaaS Access Automation

Lumos is best for mid-market companies focused on automating access requests and approvals across SaaS apps, especially those prioritizing employee self-service and productivity.

Description and Features

Lumos is a modern IGA platform that offers real-time visibility into enterprise SaaS ecosystems and allows companies to automate access requests through channels like Slack. When you connect Lumos to your organization’s cloud applications, it can check and map all user permissions and simplify access requests through a self-service portal.

Pros

- Strong SaaS access automation and self-service workflows.

- Access reviews surface only what has changed since the last cycle, reducing reviewer fatigue.

- Self-service access requests through Slack reduce IT tickets without sacrificing governance.

- Intuitive user experience for business users and employees.

Cons

- Lumos's data model is built around what the IdP knows rather than the fine-grained entitlements inside each app, so its view of access is comparatively shallow.

- Lumos was built as a SaaS management platform, so support for NHIs and agentic identities is weak compared to competitors.

- Fewer connectors for legacy and on-prem systems compared to enterprise-focused competitors.

- Not well-suited for organizations with complex regulatory compliance requirements or deep ERP governance needs.

4. Opal Security

At a Glance

Founded: 2020

Headquarters: San Francisco, California

Category: Modern IGA & Authorization

Deployment model: SaaS (cloud-native)

Customer rating: Not yet listed

Opal Security - Best for Just-in-Time Access Governance

Opal Security is best for engineering-heavy organizations that want granular, real-time access governance with deep developer tooling integrations and just-in-time access controls.

Description and Features

Opal Security is an authorization reasoning platform with an intelligent data layer that continuously analyzes access behavior across cloud, SaaS, and on-prem environments. Developer-native integrations and a 2025 Risk Layer for AI agent governance round out the platform.

Pros

- Just-in-time access controls convert standing privileges to time-bound grants.

- Developer-native integrations with Terraform, Slack, Jira, PagerDuty, and GitHub make Opal a natural fit for engineering-led security teams.

- AI agent governance is purpose-built.

- In-platform remediation eliminates ticketing loops and manual handoffs.

- Equal support for human and non-human identities.

Cons

- Smaller connector library compared to established enterprise IGA vendors like SailPoint or Saviynt.

- Traditional IGA workflows, including full lifecycle management, ERP SoD enforcement, and complex compliance reporting, are less mature than purpose-built governance platforms.

- Not well-suited for organizations with significant on-premises or legacy infrastructure.

- Opal has a smaller partner ecosystem and fewer third-party implementation resources than legacy IGA leaders.

Legacy IGA Platforms

Legacy IGA platforms are traditionally on-premises identity governance tools that rely heavily on manual workflows and static roles. They usually need dedicated teams to deploy and manage them.

5. SailPoint

At a Glance

Founded: 2005

Headquarters: Austin, Texas

Category: Enterprise IGA

Deployment model: SaaS + Hybrid

Customer rating: 4.8/5 on Gartner Peer Insights

SailPoint - Best for Heavy On-prem Enterprises

SailPoint is best for large enterprises in regulated industries that need a battle-tested IGA platform with a mature SI partner ecosystem and the flexibility to run cloud, on-prem, or both.

Description and Features

SailPoint offers AI-powered access reviews, a broad library of connectors, and lifecycle automations. Its strengths are scale and depth, and it can help you govern tens of thousands of identities across a complex hybrid environment.

Pros

- Market leader with 20 years in enterprise IGA and a Gartner Magic Quadrant Leader designation.

- Broad connector library spanning thousands of integrations across SaaS, cloud, and on-prem systems.

- Flexible deployment: SaaS (Identity Security Cloud) and on-prem (IdentityIQ) options supported.

- Large system integrator partner ecosystem for complex global deployments.

- Agent Identity Security product extends governance to AI agents operating in Salesforce, ServiceNow, Snowflake, and more.

Cons

- Implementations are notoriously complex: often 12+ months to reach maturity, with professional services costs that can triple the initial software price.

- Designed for large enterprises with dedicated IAM teams — mid-market organizations often find it oversized and expensive.

- UI is widely considered dated compared to modern cloud-native competitors.

- IdentityIQ and Identity Security Cloud have different feature sets, creating governance gaps for organizations running both simultaneously.

6. Saviynt

At a Glance

Founded: 2010

Headquarters: El Segundo, California

Category: Cloud-first IGA

Deployment model: SaaS

Customer rating: 4.8/5 on Gartner Peer Insights

Saviynt - Best for ERP-heavy Organizations

Saviynt is best for enterprises looking to consolidate IGA, PAM, and Application Access Governance into a single platform, particularly those running complex ERP environments like SAP or Oracle that require strong Separation of Duties enforcement.

Description and Features

Saviynt is a cloud-native IGA platform that provides identity governance and cloud infrastructure entitlement management (CIEM) in a single solution. It has machine learning capabilities, and its built-in IdentityBot RPA engine automates provisioning tasks. It’s a good choice if you want a platform that covers IGA, PAM, and CIEM without having to buy three separate tools.

Pros

- Converges IGA, PAM, and Application Access Governance into a single platform, eliminating the need to buy and integrate separate tools.

- Out-of-the-box SoD rulesets for SAP, Oracle, Workday, Salesforce, and NetSuite, which is a significant advantage for ERP-heavy organizations.

- Five consecutive Gartner Peer Insights Customers' Choice recognitions — the only vendor in this category with that distinction.

- Available on AWS Marketplace for simplified procurement.

Cons

- Steep learning curve and complex initial setup — typically requires a dedicated IAM team.

- Standard contracts are typically structured as three-year commitments.

- Support responsiveness can be inconsistent, particularly during issue resolution.

- Licensing SKU changes have created confusion and unexpected feature gaps for existing customers.

7. Omada

At a Glance

Founded: 2000

Headquarters: Copenhagen, Denmark

Category: IGA

Deployment model: SaaS + On-prem

Customer rating: 4.6/5 on Gartner Peer Insights

Omada - Best for Compliance-heavy Organizations

Omada is best for European enterprises and organizations with strict GDPR, NIS2, or cross-border data residency requirements that need deep hybrid environment support and a strong implementation track record.

Description and Features

Omada Identity Cloud’s best features are code-free configuration, AI-powered analytics, and role-based access control. Omada can be a good choice for mid-to-large companies that need a structured, compliance-focused IGA solution with strong support for hybrid environments.

Pros

- Founded in 2000, meaning Omada has one of the deepest track records in enterprise IGA, with proven deployments in complex hybrid environments.

- Code-free configuration reduces dependency on developers for workflow and policy changes.

- Cloud Accelerator package offers a guaranteed 12-week implementation at a fixed cost, which is rare for IGA vendors.

- Strong European presence with deep expertise in GDPR, NIS2, and cross-border compliance requirements.

Cons

- Smaller community, fewer public knowledge base resources, and fewer third-party integration partners than SailPoint or Okta.

- Feature discovery is not always intuitive — some capabilities are buried under non-obvious menu labels.

- Implementation still requires a meaningful lift; initial performance can lag before the system is fully tuned.

- Troubleshooting import errors and single identity issues can be difficult for administrators.

- Less momentum in North America compared to European markets.

Identity and SaaS Governance Platforms

Identity and SaaS governance platforms prioritize fast deployment and visibility, but they often fall short of full lifecycle management.

8. Okta Identity Governance

At a Glance

Founded: 2009

Headquarters: San Francisco, California

Category: IGA (add-on module to Okta platform)

Deployment model: SaaS

Customer rating: 4.2/5 on Gartner Peer Insights

Okta Identity Governance - Best for Existing Okta Customers

Okta Identity Governance is best for companies already using Okta that want to extend their IAM platform into lightweight IGA with minimal additional tooling.

Description and Features

Okta Identity Governance (OIG) extends Okta’s core identity platform. It leverages Okta’s existing directory and SSO integrations to add lifecycle management, periodic reviews, and audits to verify who has access to what without requiring a separate IGA deployment.

Pros

- The only vendor on this list with publicly listed pricing (~$4/user/month as a standalone add-on; ~$17/user/month in the full Essentials bundle).

- Lifecycle Management, Workflows, and Access Governance share the same data model and admin experience as core Okta.

- Fast time to value for organizations already running Okta as their IdP.

- Strong pre-built integrations across the modern SaaS stack.

Cons

- Not a viable standalone IGA platform — value is almost entirely dependent on existing Okta adoption.

- Limited advanced SoD controls and granular policy engines compared to dedicated IGA vendors.

- Governance capabilities thin out significantly for non-SaaS, hybrid, or on-prem environments.

- Not well-suited for complex regulatory compliance use cases requiring deep entitlement modeling.

9. CyberArk Identity Security Platform (Zilla)

At a Glance

Founded: 1999 (CyberArk) / 2019 (Zilla)

Headquarters: Petach Tikva, Israel

Category: PAM + IGA

Deployment model: SaaS + Hybrid

Customer rating: 4.8/5 on Gartner Peer Insights

CyberArk Identity Security Platform - Best for PAM-first Orgs Expanding into IGA

CyberArk Identity Security Platform is best for organizations that already rely on CyberArk for privileged access management and want to extend modern IGA capabilities through the same platform rather than buying a standalone tool.

Description and Features

CyberArk is known for its robust privileged access management (PAM) capabilities and has expanded to offer broader identity security. CyberArk can help you secure your high-risk credentials (enforcing just-in-time access and recording privileged sessions), and it also provides features like adaptive MFA and identity lifecycle management.

Pros

- Modern IGA via the Zilla acquisition.

- 1,000+ integrations spanning cloud, SaaS, and on-prem environments.

- Just-in-time access with zero standing privileges reduces attack surface across both PAM and IGA workflows.

- AI Profiles capability automates role management using machine learning.

Cons

- Acquired by Palo Alto Networks in February 2026, meaning the product roadmap and pricing are subject to change.

- IGA capabilities are newer and less mature than dedicated IGA platforms. In particular, access request workflows have gaps.

- UI is considered dated by many users compared to modern cloud-native alternatives.

- Platform upgrade stability has been flagged as a concern in user reviews.

- Best fit is PAM-first organizations — pure IGA buyers may find the platform oversized and expensive for their needs.

Note: Palo Alto Networks acquired CyberArk in February 2026.

10. Zluri

At a Glance

Founded: 2020

Headquarters: Milpitas, California

Category: SaaS Management + IGA

Deployment model: SaaS (cloud-native)

Customer rating: 4.6/5 on Gartner Peer Insights

Zluri - Best for Mid-Market SaaS Management

Zluri is best for mid-market companies looking for a simple, SaaS-first IGA solution with strong SaaS discovery and application management capabilities, without the overhead of an enterprise-grade IGA deployment.

Description and Features

Zluri is a SaaS management and identity governance platform that uses its discovery engine to surface all applications in your environment, including shadow IT. This comprehensive visibility enables IT and security teams to see exactly which tools are being accessed and by whom, providing a strong foundation for governance and cost optimization.

Pros

- Nine-method discovery engine surfaces all applications in an environment, including shadow IT, which is one of the most comprehensive SaaS visibility approaches in this category.

- IGA and SaaS spend management in one platform. Access governance and license cost optimization are addressed together.

- Sub-hour JML processing means that new hire provisioning and offboarding happen in minutes, not batch cycles.

- Supports access reviews across multiple IdPs (Microsoft Entra ID, formerly Azure AD; Google Workspace; Okta; JumpCloud) simultaneously.

- Well-suited for mid-market organizations that want SaaS control without enterprise-grade complexity.

Cons

- Policy enforcement and compliance capabilities are less mature than dedicated IGA platforms.

- Feels more like a SaaS management tool with governance features than a governance platform with SaaS management — an important distinction for compliance-driven buyers.

- Not well-suited for organizations with complex regulatory mandates, deep SoD requirements, or significant on-prem infrastructure.

- The feature set is still maturing for enterprise-scale IGA use cases.

Frequently Asked Questions

What is the difference between modern IGA and legacy IGA?

Modern IGA platforms are cloud-native, AI-driven systems that continuously govern identity access in real time, while legacy IGA platforms are on-premises tools built for periodic, manual governance. Legacy platforms rely on scheduled access reviews, manual provisioning, and dedicated engineering teams. Modern IGA replaces that model with continuous monitoring and automated remediation that scales across human, non-human, and AI agent identities, with faster deployment and lower total cost of ownership.

Which IGA platforms support AI agent governance?

Several IGA platforms have introduced AI agent governance capabilities, including Linx, Veza, Opal Security, SailPoint, Saviynt, and CyberArk. Linx governs AI agents and offers continuous drift monitoring. Veza (now part of ServiceNow) provides visibility into MCP servers and LLM infrastructure. Opal Security has introduced a Risk Layer specifically for agentic authorization requests and ships a native MCP server for AI-driven access automation. SailPoint has extended governance to AI agents in Salesforce, ServiceNow, and Snowflake. Saviynt and CyberArk have expanded non-human identity coverage to include agent credentials.

What is the difference between SaaS IGA and on-premises IGA?

SaaS IGA is a cloud-hosted service managed by the vendor; on-premises IGA is software installed and maintained on your own infrastructure. Most modern IGA vendors have moved exclusively to SaaS; legacy platforms like SailPoint IdentityIQ remain available on-premises for organizations with strict data sovereignty requirements.

Does my company need IGA if we already use Okta or Microsoft Entra?

Okta and Microsoft Entra are identity providers that handle authentication and basic lifecycle management, but they are not full identity governance platforms. IGA addresses a complementary set of problems: enforcing least privilege, automating access reviews, managing separation of duties, and governing non-human identities. Both Okta and Microsoft offer governance add-ons, but organizations with hybrid infrastructure, complex compliance requirements, or applications outside those ecosystems typically need a purpose-built IGA platform.

Which IGA platforms are best for mid-market companies?

For mid-market organizations, the best-fit platforms prioritize fast deployment and low operational burden: Lumos, Zluri, Linx, and Opal Security are strong options that deliver value without a dedicated IAM team.

Which IGA platforms are best for large enterprises?

For large enterprises in regulated industries, SailPoint, Saviynt, and Linx offer the compliance automation, ERP integration, and hybrid environment support that complex organizations require.

Do IGA platforms require professional services to deploy?

It depends on the platform and the complexity of your environment. Legacy platforms like SailPoint IdentityIQ almost always require vendor-led or partner-led professional services, with implementations taking 6 to 12 months and services costs that can match or exceed the software license. Modern cloud-native platforms like Linx, Lumos, and Zluri are designed to reduce or eliminate that dependency. When evaluating vendors, ask whether professional services are required or optional and whether implementation costs are included in the platform fee.

What are the top IGA tools in 2026?

The top IGA tools in 2026 fall into three categories. Modern platforms include Linx Security, Lumos, Veza (now part of ServiceNow), and Opal Security. Established enterprise platforms include SailPoint and Saviynt, which offer deep compliance automation at the cost of implementation complexity. A third group, made up of Okta Identity Governance, CyberArk (which acquired Zilla Security in 2025 and was acquired by Palo Alto Networks in 2026), Omada, and Zluri, rounds out the list with strengths in ecosystem integration, privileged access, European compliance, and SaaS management, respectively.

What should I look for when evaluating IGA vendors?

The most important factors when evaluating IGA vendors are deployment speed, AI capability, connector coverage, and total cost of ownership. Ask whether the platform requires professional services or can be deployed by your internal team. Distinguish between AI-native platforms and those with AI bolted onto a legacy system. Get the full TCO picture including licensing, implementation, and any features charged separately. Lastly, analyst recognition from Gartner or Forrester provides a useful independent quality signal.

Conclusion

In 2026, the direction of the IGA market is clear: Speed, AI-native automation and augmentation, in-platform remediation, and out-of-the-box integrations are now non-negotiable. The best modern IGA tools combine these features with full visibility and intuitive identity lifecycle management.

This is where Linx Security leads the pack. Linx provides full identity governance and immediate time-to-value through its zero-configuration connectors across cloud, SaaS, and on-prem environments. It’s purpose-built for ease of use: You don’t need professional services to deploy or operate Linx.

Better yet, Linx offers round-the-clock, AI-driven coverage so that no identity issues fall through the cracks. Linx Security’s Autopilot continuously analyzes identity risks and auto-remediates policy violations before they become security incidents.

If you are reviewing IGA vendors, read this blog to learn the 10 questions to ask when evaluating IGA solutions.

And if you’re looking for an IGA platform that checks all of the boxes, book a demo with Linx Security to experience what an industry-leading IGA can do.

We Shipped Autopilot 10 Weeks Ago. Here's the Unexpected Thing Customers Want

We shipped Autopilot 10 weeks ago. Autopilot is our autonomous AI agent for identity governance, designed to continuously monitor identity environments, evaluate risk in context, and take action without waiting for human review.

Since then, what's surprised me most isn't about the product. It's about what enterprise security leaders actually want from autonomy, and how dramatically that differs from what the identity industry has been selling them for the last decade.

What follows are notes from inside that learning. Real conversations with the security teams running Autopilot today, plus the CISOs, Heads of IAM, and identity architects evaluating it for the next wave. Across retail, financial services, healthcare, hospitality, and Big Tech. The patterns showed up faster than I expected. They were more uniform than I expected. And one of them surprised me.

The pattern across every conversation

Different industries. Different sizes. Different titles. The same line, in slightly different words.

A CISO at a global financial services firm put it most directly: "I don't need another alert and a warning. I need something to take action."

A senior identity architect at a Fortune 500 retailer framed the other side of the same coin: "I need a log of every action the agent takes, with the reasoning. We still work with auditors, and 'the system decided' isn't a good enough answer."

On the surface those two statements look opposite. One asks for autonomy. The other asks for documentation. But they're the same insight, said from two seats: enterprises want autonomous action, and they want a clean audit trail of every action that gets taken. Autonomy without auditability is a non-starter in any regulated environment. Auditability without autonomy is the status quo we've all been stuck with for a decade.

The thing that's been mis-sold for ten years is that autonomy and accountability are opposites. They're not. They're complementary. Customers are not asking us to choose between "fully automated" and "humans review everything." They're asking for a system that does the work and shows its work, at the same time, every time.

Almost nobody in the legacy identity governance market has built that combination. They've built two products: rules engines that fire alerts, and access reviews that get rubber-stamped quarterly. Neither is autonomous. Neither produces the kind of action-level audit trail a regulated environment can defend. Both are exhausting.

We built Autopilot to do both: take the action, and produce a complete, defensible audit log of every step it took and why. That's the unlock.
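For readers who want a picture of what "a log of every action, with the reasoning" could look like, here is a minimal sketch. It is not Autopilot's actual log format; the `AuditRecord` fields, the `record_action` helper, and the sample values are all assumptions made for illustration.

```python
import json
from dataclasses import asdict, dataclass
from datetime import datetime, timezone
from typing import List


@dataclass(frozen=True)
class AuditRecord:
    """One agent action plus the reasoning behind it (hypothetical schema)."""

    agent: str
    action: str
    target: str
    reasoning: List[str]
    timestamp: str


def record_action(
    log: List[str], agent: str, action: str, target: str, reasoning: List[str]
) -> AuditRecord:
    """Append one JSON line per action so auditors can replay what happened and why."""
    rec = AuditRecord(
        agent=agent,
        action=action,
        target=target,
        reasoning=list(reasoning),
        timestamp=datetime.now(timezone.utc).isoformat(),
    )
    log.append(json.dumps(asdict(rec), sort_keys=True))
    return rec


audit_log: List[str] = []
record_action(
    audit_log,
    agent="admin-drift-monitor",
    action="revoke",
    target="alice:crm:admin",
    reasoning=["no peers hold this role", "no active JIT grant"],
)
print(audit_log[0])
```

The design point is that the reasoning travels with the action in the same record, so "the system decided" is never the only answer available to an auditor.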

The crawl-walk-run shape they're choosing themselves

I expected we'd have to convince buyers to start small with autonomy. To meet them where they were, hand-hold them through a phased rollout, and prove value before unlocking more.

Instead, security teams are articulating the pattern back to us before we pitch it.

A Head of Security at a healthcare enterprise said it clearly: "Trust in autonomy builds over time. So maybe it prompts me first, but as we get comfortable with it, this just needs to run. I've got an agent for that. It just happens."

Almost every conversation followed this shape. Phase 1: the agent investigates, surfaces a recommendation, a human clicks. Phase 2: an admin pre-approves classes of action, and the agent executes. Then, eventually, full autonomy on narrow, well-bounded tasks.
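The three phases can be sketched as a small policy gate. This is an illustrative model of the shape customers described, not how Autopilot is configured; the `Phase` enum, the `decide` function, and the action-class names are hypothetical.

```python
from enum import Enum
from typing import Set


class Phase(Enum):
    RECOMMEND = 1     # agent investigates and recommends; a human clicks
    PRE_APPROVED = 2  # admin pre-approves classes of action; agent executes those
    AUTONOMOUS = 3    # full autonomy, but only on narrow, well-bounded tasks


def decide(
    phase: Phase,
    action_class: str,
    pre_approved: Set[str],
    autonomous_tasks: Set[str],
) -> str:
    """Return "execute" if the agent may act alone, otherwise "ask_human"."""
    if phase is Phase.AUTONOMOUS and action_class in autonomous_tasks:
        return "execute"
    if phase in (Phase.PRE_APPROVED, Phase.AUTONOMOUS) and action_class in pre_approved:
        return "execute"
    # Phase 1, or any action outside the approved scope, falls back to a human.
    return "ask_human"


pre_approved = {"revoke_unjustified_admin"}
print(decide(Phase.RECOMMEND, "revoke_unjustified_admin", pre_approved, set()))
print(decide(Phase.PRE_APPROVED, "revoke_unjustified_admin", pre_approved, set()))
```

Note that even in the last phase the default is still to ask a human: autonomy is granted per action class, never globally.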

This is buyers leading the architecture, not vendors prescribing it. That's a tell. It means the market has matured past the question of whether autonomy belongs in identity governance and moved to the question of how to operationalize it without losing accountability.

The teams we're working with don't need convincing. They need a credible path. We've built that path into Autopilot from day one.

What buyers are rejecting

Every demo we ran ended in a comparison. Security leaders held Autopilot up against their existing IGA stack, and named what was breaking.

The list rhymes across industries.

Quarterly access reviews are theater. A senior security leader at a global financial data firm asked his team, almost rhetorically, "would you rather have compliance or security?" The framing was sharp because it was honest. Quarterly UAR cycles exist for auditors, not for defenders. Everybody in the room knows it. Nobody in legacy IGA has the architecture to fix it, because their architecture is built around the cycle. Ours isn't.

Rules-based systems produce more alerts, not more action. Several CISOs described arriving at security programs that had ten thousand identity rules and one human staring at the dashboard. The rules weren't wrong. They were just disconnected from the question of who actually had the authority to act on them. Autopilot collapses that gap.

Legacy deployments take years and don't reduce manual work. One enterprise security leader described getting more value in three weeks of running Linx than in three years of running two of the largest legacy IGA platforms combined. I'm not going to name the platforms. The point wasn't that those tools are bad. It's that they were built for a different era of identity, one where humans had time to be in the loop on every decision. That era is over.

One enterprise we engaged with was running five identity products simultaneously. Five. They had to decommission one because it was disrupting the others. This is what the end-state of "buy more tools" looks like. It's not a security program. It's a tax on the security team. We replace that stack with one platform that does what those five together couldn't do.

These aren't edge cases. They're the pattern.

The agents teams want first

When teams pick their first Autopilot deployment, three patterns dominate.

Admin Drift Monitor. Listens for any access change that elevates someone to admin. Runs a peer comparison and a JIT/access-profile check. Only fires if it finds no justification for the elevation. The reason this one wins is concrete: it produces almost zero false positives, because the bar for "should this human be admin" is exceptionally well-defined inside any mature security program. Teams audit the agent's reasoning easily. They trust it within days. Then they extend it.
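The decision logic described above can be sketched in a few lines. This is a simplified illustration under stated assumptions, not the agent's real code: the `ElevationEvent` fields, the `should_fire` function, and the 50% peer threshold are all hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ElevationEvent:
    """An access change that elevated a user to admin (hypothetical shape)."""

    user: str
    peers_with_admin: int       # how many of the user's peers hold the same admin role
    peer_count: int             # size of the peer group
    has_active_jit_grant: bool  # a time-bound JIT grant covers this elevation
    in_access_profile: bool     # the role is part of the user's approved access profile


def should_fire(event: ElevationEvent, peer_threshold: float = 0.5) -> bool:
    """Fire only when no justification for the elevation is found."""
    peer_ratio = event.peers_with_admin / event.peer_count if event.peer_count else 0.0
    justified = (
        peer_ratio >= peer_threshold
        or event.has_active_jit_grant
        or event.in_access_profile
    )
    return not justified


unexplained = ElevationEvent("alice", 0, 12, False, False)
covered_by_jit = ElevationEvent("bob", 0, 12, True, False)
print(should_fire(unexplained), should_fire(covered_by_jit))
```

Because any single justification suppresses the alert, the check errs toward silence, which is consistent with the low false-positive behavior described above.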

UAR Reviewer Classifier. Continuously evaluates entitlements during access review campaigns and pre-recommends approve/deny before the human even opens the review. The pattern: stop asking humans to be the first decision-makers. Make them the second. The human's time is worth far more on the close calls than on the obvious ones.
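A toy version of "make the human the second decision-maker" might look like the following. It uses two simple rules as a stand-in for whatever model the real classifier uses; the `pre_recommend` function, its signals, and its thresholds are assumptions for illustration.

```python
from typing import Optional, Tuple


def pre_recommend(
    days_since_last_use: Optional[int],
    peer_share: float,
    stale_after_days: int = 90,
    common_share: float = 0.8,
) -> Tuple[str, str]:
    """Return (recommendation, rationale) for one entitlement under review.

    days_since_last_use is None when the entitlement has never been used.
    peer_share is the fraction of the user's peers holding the same entitlement.
    """
    unused = days_since_last_use is None or days_since_last_use > stale_after_days
    common = peer_share >= common_share
    if not unused and common:
        return "approve", "recently used and held by most peers"
    if unused and not common:
        return "deny", "unused within the window and uncommon among peers"
    # Mixed signals: this is the close call worth a human reviewer's time.
    return "review", "signals disagree; left for the human reviewer"


print(pre_recommend(3, 0.95))
print(pre_recommend(None, 0.10))
print(pre_recommend(200, 0.90))
```

The obvious cases arrive pre-decided with a rationale attached, and only the third kind of result asks for real human judgment.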

Access Profile Tuner. The next agent we're shipping. It continuously refines access profiles based on real usage patterns, tightening over-provisioned access automatically and surfacing the gap between what someone has and what they actually use day to day. Same architectural pattern as the first two: narrow scope, accountable action, the human as the second decision-maker, not the first.
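The "gap between what someone has and what they actually use" is, at its simplest, a set difference over a usage window. The sketch below shows that idea only; the `tune_profile` function, the `min_uses` parameter, and the sample entitlements are hypothetical and do not describe the shipped agent.

```python
from collections import Counter
from typing import Iterable, Set, Tuple


def tune_profile(
    granted: Set[str], usage_events: Iterable[str], min_uses: int = 1
) -> Tuple[Set[str], Set[str]]:
    """Split granted entitlements into (keep, unused) based on observed usage.

    usage_events is one entitlement name per observed use within the review window.
    """
    counts = Counter(usage_events)
    keep = {e for e in granted if counts[e] >= min_uses}
    return keep, granted - keep


granted = {"crm:read", "crm:export", "billing:admin"}
events = ["crm:read", "crm:read", "crm:export"]

keep, unused = tune_profile(granted, events)
print(sorted(keep), sorted(unused))
```

A production tuner would also need to account for entitlements that are used rarely but legitimately, such as break-glass or year-end access, which is one reason the human stays in the loop as the second decision-maker.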

What links these three agents is what they aren't. They aren't general-purpose AI assistants. They aren't conversational chatbots. They aren't models that "help you think about identity." They're narrow, accountable, single-purpose agents. They do one thing. They do it well. They show their work.

This is the part of the next 12 months in identity that I think the industry is going to get wrong. The future isn't going to be one giant AI that handles all of identity governance. It's going to be a fleet of narrow agents, each one auditable, each one deployed when the team is ready, each one retired or replaced as the threat shape changes.

The phrase I've started using internally is "the control plane is the agent fleet, not the model." That's the architectural bet behind Autopilot. We've built it that way because the security teams running it are operating that way.

We make autonomy boring. Boring in the way that fire suppression in a data center is boring. Specifically engineered, well-instrumented, mostly invisible, deeply trusted. That's the bar we set for Autopilot, and it's the bar we're meeting.

What this means for the next twelve months

A few predictions, from where I sit 10 weeks in.

The identity governance category is going to fragment along the autonomy axis. Vendors who ship generic "AI-powered" features bolted onto rules engines will lose share to vendors who ship narrow, accountable agents that customers can audit, deploy, and extend. The winners will be the ones who make autonomy explicable, not the ones who make it impressive. We've made our bet, and the market is validating it in real time.

The "control plane" framing is the right one, but it goes beyond agents. Microsoft, Okta, and others are now naming their agent control surfaces. That's a market-defining moment. The deeper truth, though, is that the agent control plane only works if the identity control plane underneath it is unified. You can't govern an agent's actions if you don't have a unified record of every human, machine, service account, and agent identity in your environment. Identity becomes the substrate. The agent layer is the workload. Linx is the only platform built for both.

CISOs are going to keep telling us they want autonomy and verification together. I expect this signal to get louder, not quieter. The boards of the companies our customers serve are starting to ask "are we governable?" instead of "are we secure?" That's a more sophisticated question, and it's going to drive procurement priorities for the next two years. The platforms that answer it well will define the next decade of security.

The bar for what counts as "autonomous identity security" is going to rise quickly. Six months from now, the demo bar will be entirely different from where it is today. The bar is being set by the platforms shipping today, with skin in the game. We are one of them.

Closing

We shipped Autopilot 10 weeks ago. The conversations are different from the ones I had a year ago. Different from six months ago. Different from 10 weeks ago.

The market is moving, and it's moving toward something specific: autonomous identity governance that earns trust by showing its work. That's exactly what we built.

If you're a CISO or Head of IAM thinking about how autonomy fits into your identity program (what to deploy first, how to phase trust, where the audit trail needs to live), we'd be glad to compare notes. The companies that figure this out first won't be the ones who buy the most tools. They'll be the ones who deploy autonomy with discipline. We're doing it now. Come see what 10 weeks of shipping autonomous identity governance actually looks like.

10 weeks in, that's what I'm certain of.

Frequently asked questions

What is autonomous identity security?

Autonomous identity security is the use of AI agents to continuously monitor identity environments, evaluate risk in context, and take action in real time without waiting for human review. It replaces the periodic, alert-driven model of legacy identity governance with a continuous, agent-driven model that operates at machine speed and produces a complete audit trail of every action taken.

What is Linx Security's Autopilot?

Autopilot is Linx Security's autonomous AI agent for identity governance. It runs as a fleet of narrow, single-purpose agents, including Admin Drift Monitor, UAR Reviewer Classifier, and Access Profile Tuner, that each perform a specific identity governance task continuously, with full action logging and customer-readable rationale on every decision. Autopilot is shipping today, with deployments and active engagements across retail, financial services, healthcare, hospitality, and Big Tech.

How is autonomous identity security different from legacy IGA?

Legacy identity governance platforms are built around quarterly access review cycles, rule-based alerting, and human-in-the-loop decision making. Autonomous identity security is built around continuous monitoring, AI-driven contextual risk evaluation, and direct action by accountable agents. Linx Security delivers value in weeks rather than the months or years typically required by legacy IGA platforms.

Which Autopilot agents do customers deploy first?

The two most common first deployments are Admin Drift Monitor, which detects unauthorized administrative privilege elevation and only fires when it finds no business justification, and UAR Reviewer Classifier, which pre-classifies entitlements during access review campaigns to reduce human review time. Access Profile Tuner is the next agent shipping, continuously refining access profiles based on actual usage patterns. Teams typically extend to additional agents over the following 30 to 90 days as trust in the platform builds.

How does autonomous identity governance work with auditors?

Autonomous identity security only works in regulated environments if every action the system takes produces a complete, defensible audit trail. Linx Security's Autopilot logs every action with full reasoning, the data inputs the agent used, and the policy or context that triggered the decision. This supports the audit evidence requirements of SOC 2, ISO 27001, NIST frameworks, and most other regulatory frameworks while maintaining continuous autonomous operation.

Who should consider autonomous identity security?

Autonomous identity security is most relevant for CISOs, Heads of Identity and Access Management, and security architects at enterprises with more than 1,000 employees or significant non-human identity sprawl across service accounts, machine identities, AI agents, and contractor access. Companies operating in regulated industries (financial services, healthcare, retail, hospitality) and those running multiple legacy identity tools simultaneously typically see the fastest value from migrating to a single autonomous platform.

User Access Reviews (Access Certifications): Why They Fail and How to Fix Them

Introduction

As identity-based breaches continue to rise and access misuse becomes a primary attack path, organizations rely on User Access Reviews (UARs) to answer two seemingly simple questions: Who can access what, and should they still be able to?

In theory, this is where stale access should be caught. In reality, it often slips by unnoticed.

Consider a common scenario: A sales operations manager moves into a revenue analytics role. Months later, during a quarterly access review, their new manager is asked to approve continued access to Salesforce admin permissions, Snowflake read access, and a legacy CRM role labeled “SalesOps_Admin_v2.” Faced with dozens of similar decisions, tight deadlines, and little context, the manager approves everything. The review is completed on time. The access persists.

As a result, risk compounds quietly. If an attacker later compromises the account, those lingering admin permissions provide a direct path into sensitive systems, and the activity may appear legitimate because the access was formally approved. By the time unusual behavior is detected, the damage may already be done, hidden behind what looks like valid access.

This article takes a closer look at what User Access Reviews are, why they often fail in enterprise environments, and what actually improves them, including how teams can evolve beyond periodic certification to continuous identity governance.

What Are User Access Reviews and Why Do Organizations Use Them?

User Access Reviews, sometimes referred to as Access Certifications, are formal review processes used to confirm that individuals retain the right level of access to systems, applications, and data.

In modern environments, access needs change constantly. People join teams, move roles, take on short-term projects, and leave. Systems are added, deprecated, or reconfigured. Without a mechanism to revisit access decisions, privileges tend to accumulate.

UARs counter that drift before it turns into persistent risk, making them a key control for security and compliance programs. Security teams rely on them to reduce unnecessary access, regulators expect them, and auditors ask for evidence.

But UARs are much more than box-checking exercises: They’re crucial for combatting the consequences of outdated or over-permissive access, which include an increased blast radius, lateral movement opportunities, and data exposure. Left unchecked, excessive access can also undermine segregation of duties, increase the likelihood of insider misuse, and complicate incident response when permissions are overly broad or poorly documented.

What Do You Actually Review During a UAR?

On a technical level, a User Access Review usually evaluates three core elements:

- First, the user or identity. This may include employees, contractors, service accounts, or other non-human identities. Reviewers need to understand who the identity represents and their current role.

- Second, the application or system. This could be a SaaS tool like Jira or Workday, a cloud service such as AWS, or an internal application. Each system has its own access model and risk profile.

- Third, the entitlements or permissions. These are the specific roles, groups, or privileges granted, such as “Jira Project Admin,” “AWS IAM PowerUser,” or “NetSuite AP Clerk.”

A typical review asks an approver to confirm whether each combination of user, system, and entitlement is still required. Teams often formalize this process using a standardized User Access Review checklist to ensure reviews are consistent across systems.
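To make the three elements concrete, here is a minimal Python sketch of how a review item could be modeled. The `ReviewItem` class and `build_review_items` helper are hypothetical names invented for illustration, not part of any particular UAR product; the sketch assumes raw access data arrives as simple (identity, system, entitlement) tuples.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ReviewItem:
    """One decision an approver must make (hypothetical model)."""
    identity: str      # employee, contractor, service account, etc.
    system: str        # e.g. "Jira", "AWS", "NetSuite"
    entitlement: str   # e.g. "Jira Project Admin"


def build_review_items(grants):
    """Collapse raw grant tuples into unique, sorted review items.

    Duplicates are common when the same grant is exported from more
    than one source, so they are removed before review.
    """
    unique = {ReviewItem(*grant) for grant in grants}
    return sorted(unique, key=lambda i: (i.identity, i.system, i.entitlement))


grants = [
    ("alice", "Jira", "Jira Project Admin"),
    ("alice", "Jira", "Jira Project Admin"),   # duplicate export row
    ("bob", "AWS", "AWS IAM PowerUser"),
    ("alice", "NetSuite", "NetSuite AP Clerk"),
]
items = build_review_items(grants)
for item in items:
    print(f"Should {item.identity} keep '{item.entitlement}' on {item.system}?")
```

Each printed line corresponds to one question on the reviewer's checklist, which is why the number of combinations grows so quickly in large environments.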

How Are UARs Usually Run?

Most organizations run User Access Reviews as periodic campaigns on a quarterly, semiannual, or annual basis. Security or identity teams first collect access data from identity providers, SaaS applications, and cloud platforms. The data is grouped by user or application and routed to managers, application owners, or both, depending on the organization’s review model.

In manager-led reviews, line managers are asked to certify all access for their direct reports. In application-owner models, system owners validate entitlements for all users of their application. Two-tier approaches combine both, often starting with the manager and escalating higher-risk access to application owners.
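The three routing models can be sketched in a few lines of Python. This is an illustrative sketch only: the `ReviewModel` enum, the `route_review` function, and the lookup tables passed to it are assumptions made for the example, and a real program would pull managers and owners from an HR system or application catalog.

```python
from enum import Enum


class ReviewModel(Enum):
    MANAGER = "manager"
    APP_OWNER = "app_owner"
    TWO_TIER = "two_tier"


def route_review(item, model, managers, app_owners, high_risk):
    """Return the ordered list of reviewers for one review item.

    item:       dict with "identity", "system", and "entitlement" keys
    managers:   maps identity -> line manager
    app_owners: maps system -> application owner
    high_risk:  set of entitlements that need technical judgment
    """
    manager = managers[item["identity"]]
    owner = app_owners[item["system"]]
    if model is ReviewModel.MANAGER:
        return [manager]
    if model is ReviewModel.APP_OWNER:
        return [owner]
    # Two-tier: start with the manager, escalate higher-risk access.
    reviewers = [manager]
    if item["entitlement"] in high_risk:
        reviewers.append(owner)
    return reviewers


managers = {"alice": "dana"}
app_owners = {"AWS": "sam"}
high_risk = {"AWS IAM PowerUser"}
item = {"identity": "alice", "system": "AWS", "entitlement": "AWS IAM PowerUser"}

print(route_review(item, ReviewModel.TWO_TIER, managers, app_owners, high_risk))
```

In the two-tier case the item goes to the manager first and then to the application owner, because the entitlement is in the high-risk set.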

In many enterprises, these reviews are still driven by spreadsheets that are exported from systems, emailed to reviewers, tracked manually, and reconciled at the end of the cycle. Evidence is archived for audit purposes.

This model made sense when environments were smaller and change was slower. Today, it struggles to keep up.

Why Do User Access Reviews Break Down in Practice?

In most enterprises, access reviews fail not because teams ignore them but because the process buckles when it meets real-world complexity.

Specifically, ineffective UARs are defined by a lack of context, unclear ownership, a “rubber-stamping” mentality, suboptimal evidence collection, and a lack of real-time coverage.

Why Is There So Little Context in Reviews?

The most common UAR failure mode is a lack of context. Reviewers are asked to make decisions without understanding what the access enables.

A manager reviewing access for an engineer might see roles like “AWS_ReadOnly,” “AWS_PowerUser,” and “CustomPolicy_ProdOps.” Without visibility into what those roles allow or how they are used, the safest path becomes approval.

Who Actually Owns Access Decisions?

Ownership ambiguity compounds the other UAR failure points. In many enterprises, it’s unclear who is accountable for access: managers, application owners, or security teams. This can lead to access decisions that are delayed, inconsistently applied, or approved without meaningful inspection.

Manager-only reviews are common, but managers may not understand application-specific permissions. App-owner reviews improve technical accuracy, but app owners may not know whether access matches a user’s role.

Organizations that require approval from both the manager and the application owner improve coverage but also increase friction and review fatigue.

Why Do Reviews Turn Into Rubber Stamping?

Faced with long lists and tight deadlines, many reviewers default to approving everything. After all, reviewers are rarely rewarded for revoking access, but they are penalized socially or operationally when revocations cause disruption. Over time, this creates a culture in which UARs are seen as a compliance chore rather than a security control.

What Makes Evidence Collection So Hard?

Collecting access data across SaaS, cloud, and on-prem systems is difficult. Entitlements change. Integrations fail. Access lists go stale before reviews start. This means data is often inaccurate, incomplete, or inconsistently labeled.

Inaccuracies can be introduced downstream as well. After the review, teams must package evidence for auditors: who reviewed what, when decisions were made, and what actions were taken. In spreadsheet-driven workflows, this is a manual and error-prone process.
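One way to reduce the manual packaging step is to capture each decision as a structured record at the moment it is made. The sketch below is a hedged illustration, not a prescribed audit format: the field names and the `evidence_record` and `export_evidence` helpers are assumptions chosen to mirror the three things auditors ask about (who reviewed what, when, and what action followed).

```python
import json
from datetime import datetime, timezone


def evidence_record(reviewer, identity, system, entitlement,
                    decision, decided_at, action_taken):
    """Build one audit evidence record (hypothetical schema)."""
    return {
        "reviewer": reviewer,
        "identity": identity,
        "system": system,
        "entitlement": entitlement,
        "decision": decision,                    # "approve" or "revoke"
        "decided_at": decided_at.isoformat(),    # timezone-aware timestamp
        "action_taken": action_taken,            # e.g. ticket or removal note
    }


def export_evidence(records):
    """Serialize records as JSON Lines, one decision per line."""
    return "\n".join(json.dumps(r, sort_keys=True) for r in records)


record = evidence_record(
    reviewer="dana",
    identity="alice",
    system="Salesforce",
    entitlement="SalesOps_Admin_v2",
    decision="revoke",
    decided_at=datetime(2025, 1, 15, 9, 30, tzinfo=timezone.utc),
    action_taken="entitlement removed",
)
print(export_evidence([record]))
```

Because every record carries the same fields, the export can be handed to auditors without reconciling spreadsheets after the fact.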

Why Is the Security Value So Short-Lived?

Even when reviews are completed carefully and on time, their impact fades quickly in dynamic environments. As we’ve seen, access changes every day as people switch roles, join new projects, or gain temporary permissions that are never revisited.

By the time a quarterly or annual review is finished, parts of it are already outdated. New access has been granted, old access has lingered, and the risk profile has shifted. The end result? Point-in-time reviews reassure auditors but provide only limited, short-lived protection for the organization.

What Actually Improves UARs?

For many teams, improving reviews means rethinking the tools they rely on. Modern User Access Review software shouldn’t just collect approvals; it should help reviewers understand risk, usage, and context in a single place. This is how organizations can start to automate User Access Reviews without sacrificing decision quality.

To understand the difference robust tooling makes, let’s walk through the principles that actually improve reviews:

- Full Context: In a mature enterprise UAR cycle, access data is normalized across SaaS, cloud, and internal systems so reviewers aren’t working from fragmented exports. A manager reviewing an engineer’s access can see their current role, recent access usage, and whether permissions are common for peers in similar roles. For high-risk systems, application owners are brought in when technical judgment is required.

- Iterative Improvements: When revocations happen, they aren’t treated as one-off cleanups. They become signals. If multiple users lose the same entitlement, that role definition is likely too broad. If access lingers after role changes, joiner-mover-leaver workflows need to be adjusted. Over time, this feedback loop reduces review volume and improves the quality of upstream access.

- Cross-Team Alignment: Security and business teams need a common understanding of what access means and why it matters. Without that shared understanding, reviews become mechanical exercises rather than informed risk decisions.
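The first two principles lend themselves to a small worked example. The Python sketch below shows one possible way to compute reviewer context (recent usage and peer prevalence) and to turn revocations into a signal about overly broad entitlements. All function names and thresholds (`stale_after_days`, `rare_below`, `min_revocations`) are illustrative assumptions, not values taken from any product or standard.

```python
from collections import Counter


def peer_prevalence(entitlement, peer_entitlements):
    """Fraction of peers in similar roles who hold the entitlement."""
    if not peer_entitlements:
        return 0.0
    holders = sum(entitlement in peer for peer in peer_entitlements)
    return holders / len(peer_entitlements)


def review_context(entitlement, days_since_last_use, peer_entitlements,
                   stale_after_days=90, rare_below=0.25):
    """Summarize usage and peer context for one entitlement.

    days_since_last_use is None when the access has never been used.
    """
    prevalence = peer_prevalence(entitlement, peer_entitlements)
    unused = days_since_last_use is None or days_since_last_use > stale_after_days
    return {
        "entitlement": entitlement,
        "unused": unused,
        "peer_prevalence": prevalence,
        "unusual_for_role": prevalence < rare_below,
    }


def overly_broad_entitlements(revocations, min_revocations=3):
    """Flag entitlements revoked from several users in one campaign.

    revocations: iterable of (identity, entitlement) pairs.
    """
    counts = Counter(entitlement for _, entitlement in revocations)
    return sorted(e for e, n in counts.items() if n >= min_revocations)


peers = [
    {"AWS_ReadOnly"},
    {"AWS_ReadOnly", "AWS_PowerUser"},
    {"AWS_ReadOnly"},
    {"AWS_ReadOnly"},
    {"AWS_ReadOnly"},
]
print(review_context("AWS_PowerUser", 120, peers))

revocations = [
    ("alice", "CustomPolicy_ProdOps"),
    ("bob", "CustomPolicy_ProdOps"),
    ("carol", "CustomPolicy_ProdOps"),
    ("dave", "AWS_PowerUser"),
]
print(overly_broad_entitlements(revocations))
```

A reviewer who sees that an entitlement is both unused and rare among peers has a concrete reason to revoke it, and an entitlement that keeps getting revoked is a candidate for a narrower role definition upstream.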

Taken as a whole, these patterns explain why minor process tweaks tend to have limited impact. Real improvement comes from an approach that scales as the organization evolves.

How Does Linx Security Approach User Access Reviews?